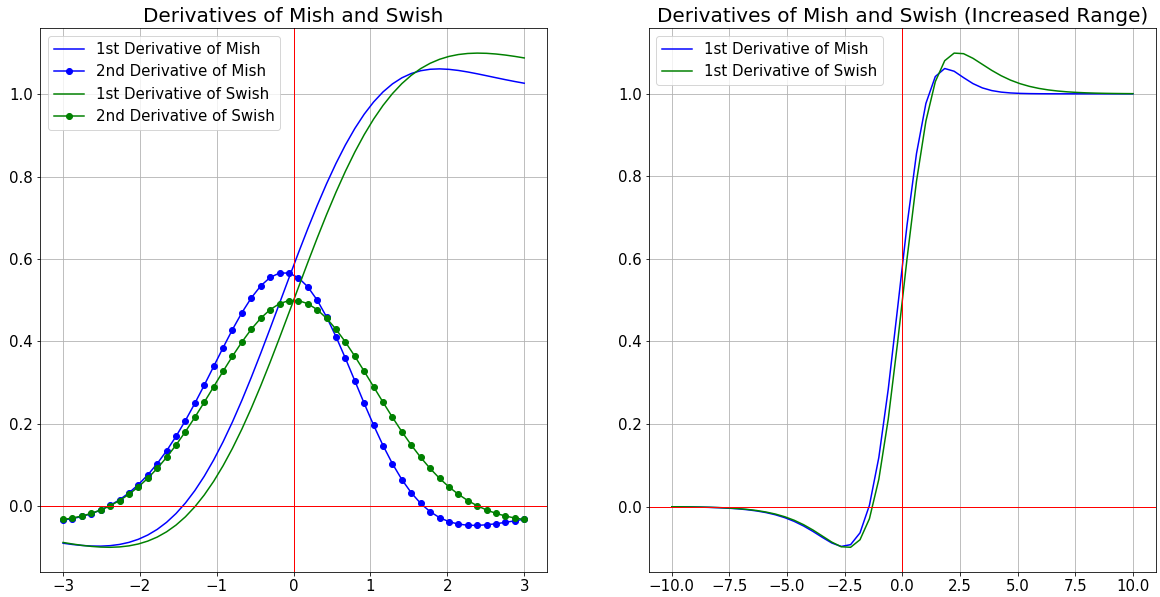

When we started using neural networks, we used activation functions as an essential part of a neuron. From the previous image, we deduce that the tangent at $x=0$ is $\infty$. The tangent (slope) of the activation function indicates whether the neuron is learning. This value is unrealistic because, in real life, we learn everything step by step. For a neuron to learn, we need something that changes progressively from 0 to 1, that is, a continuous (and differentiable) function. Such an activation function allows us to adjust weights and biases.

Activation Functions

Currently, there are several types of activation functions that are used in various scenarios. An activation function decides whether a neuron should be activated or not by calculating the weighted sum of its inputs and adding a bias to it. Put another way, an activation function in a neural network defines how the weighted sum of the input is transformed into an output from a node or nodes in a layer of the network. The purpose of the activation function is to introduce non-linearity into the output of a neuron. Sometimes the activation function is called a “transfer function”, and many activation functions are nonlinear. The activation function is the heart of the neural network, and its impact differs from one function to another.

Input layer: This layer accepts input features. It provides information from the outside world to the network. No computation is performed at this layer; nodes here just pass the information on to the hidden layer.

Hidden layer: Nodes of this layer are not exposed to the outer world; they are part of the abstraction provided by any neural network. The hidden layer performs all sorts of computation on the features entered through the input layer and transfers the result to the output layer.

Output layer: This layer brings the information learned by the network to the outer world.

Below is a short explanation of the activation functions available in the tf.keras.activations module from the TensorFlow v2.10.0 distribution and torch.nn from PyTorch 1.12.1. Nowadays, there are many activation functions, but the best known is the rectified linear unit (ReLU).

Softplus function. The mathematical expression is:

$$f(x) = \ln(1 + e^{x})$$

Swish function. Google Brain invented an activation function called Swish, defined as $f(x) = x \cdot \mathrm{sigmoid}(x)$.

Derivatives of Activation Functions

The derivative of the activation function feeds backpropagation during learning. For this reason, the function and its derivative must have a low computational cost. The derivative of softplus is:

$$f'(x) = \frac{1}{1 + e^{-x}}$$

In other words, the derivative of the softplus function is the logistic function.

Conclusion

Thus said, we have come to the end of this long article. Activation functions are still an active research topic, and many more functions are being discovered and used in deep learning. The choice of activation function is one of the hyperparameters to tune in a deep learning network, which means we cannot judge in advance which activation function will give better accuracy. Hence, we need to train our model with different activation functions and compare their performance. In practice, though, industry mostly prefers Sigmoid and ReLU, as these have generally performed well.

Thanks for staying with us and reading this article on “Activation Functions”. We hope we have covered most of the topics related to it and that it helps to refresh your knowledge. Let us know your feedback on this article and share your comments for improvements. We will come back with an interview guide on the next topic; till then, enjoy reading our other articles.
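To make the ideas in this article a little more concrete, here is a minimal sketch in plain Python of a single neuron (weighted sum plus bias, passed through an activation function) together with sigmoid, ReLU, softplus and Swish. The helper names (`neuron_output`, `numerical_derivative` and so on) are our own illustrative choices, not the API of TensorFlow or PyTorch, and the numerically stable formulations are an assumption on our side rather than something the article prescribes.

```python
import math


def sigmoid(x):
    """Logistic function, written to avoid overflow for large |x|."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def relu(x):
    """Rectified linear unit: max(0, x)."""
    return max(0.0, x)


def softplus(x):
    """Softplus ln(1 + e^x), rearranged so that exp never overflows."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def swish(x):
    """Swish: x * sigmoid(x)."""
    return x * sigmoid(x)


def neuron_output(inputs, weights, bias, activation):
    """Weighted sum of the inputs plus bias, passed through an activation.

    Hypothetical helper for illustration only.
    """
    total = sum(w * v for w, v in zip(weights, inputs)) + bias
    return activation(total)


def numerical_derivative(f, x, h=1e-5):
    """Central-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


if __name__ == "__main__":
    # One neuron with two inputs, using sigmoid as its activation.
    print(neuron_output([1.0, 2.0], [0.5, 0.25], -1.0, sigmoid))

    # The slope of softplus should closely match the logistic function.
    for x in (-2.0, 0.0, 0.5, 3.0):
        print(x, numerical_derivative(softplus, x), sigmoid(x))
```

Running the script prints the neuron output followed by pairs of values that should agree closely, which is a quick numerical check that the derivative of softplus is the logistic function. In a real project you would normally rely on the framework's own activation functions rather than hand-written ones like these.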
